10x Faster Data Processing with a Master Data Management Solution

Our client wanted to implement a reliable data platform that provides clean customer data and analytics capabilities.

50+% more accurate data in the new system

10x faster data processing

3x faster customer service

Business Case Story

The legacy system had been in use for more than 25 years, and its interface offered only a narrow range of technical functionality. Because the input formats were incompatible, the client’s team had to convert all incoming data. Our client wanted to eliminate this mundane work, which would dramatically speed up data processing and let the team focus on high-value objectives. Moreover, the system performed slowly because the source input files had grown much larger over the past decade as a result of global digitalization.

“Our main goal was to develop a complex end-to-end Master Data Management solution that aimed to provide our customer with faster performance, accurate data and regulatory compliance in the long run.

Here are the main challenges we overcame while developing the MDM solution:

- Parse out unstructured and semi-structured data and load it into a standard data warehouse architecture.

- Create a multi-domain Mastering process that manages the Master Data repository with golden records.

- Keep the data and the numerous related processes synchronized, such as Data Quality load, Master Data load/export, Link/Unlink records through the Services, receiving records (information) from other systems, and more.”

Ahmed Eshref

Technical Development Team Lead, Adastra

The Story: End-to-End Master Data Management Solution

The master data management solution developed in Ataccama IDE consolidates audio, audio-visual, musical, social media and film data into one Master repository. As a result, it can answer questions such as “How many times were AC/DC’s songs viewed on YouTube in the last week by subscribers from the US?”

The new MDM platform incorporates almost the full range of Ataccama DQC (Data Quality Center) and MDC (Master Data Center) features, such as inbound and outbound processes for connecting with Azure, Streaming, Custom and Generic services, Event Handlers for synchronization with other systems, and Multi-Domain Mastering.

On top of that, Power BI dashboards were built to present a variety of the customer’s data to end users interactively. The master data management solution combines a huge variety of incoming data into one golden repository: more than 30 types of flat files, data from 3rd-party systems, queues, services, and manual input.

The MDM solution sends regular emails with details about the executed processes to interested users. An error-handling strategy works like a try-catch block: if an internal error occurs, the system sends an email with a custom message to the Support team. All email templates can be created and customized in Ataccama; they combine basic HTML with Ataccama-specific variables.
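
The error-handling idea can be illustrated outside Ataccama with a minimal Python sketch. It is not the project's implementation: the template text, the addresses, and the names `build_error_email` and `run_with_notification` are all hypothetical, and the `send` callable stands in for whatever mail transport is actually used.

```python
import html
from email.message import EmailMessage
from string import Template

# Hypothetical template: basic HTML plus placeholder variables.
ERROR_TEMPLATE = Template(
    "<html><body><h3>Process $process failed</h3><p>$error</p></body></html>"
)


def build_error_email(process, error, to_addr):
    """Build a plain-text + HTML message describing a failed process."""
    msg = EmailMessage()
    msg["Subject"] = f"[MDM] {process} failed"
    msg["From"] = "mdm-noreply@example.com"  # assumed sender address
    msg["To"] = to_addr
    msg.set_content(f"Process {process} failed: {error}")
    msg.add_alternative(
        ERROR_TEMPLATE.substitute(
            process=html.escape(process), error=html.escape(str(error))
        ),
        subtype="html",
    )
    return msg


def run_with_notification(process, step, send, support_addr="support@example.com"):
    """Run `step`; on any error, hand an email to `send` and report failure."""
    try:
        step()
        return True
    except Exception as exc:
        send(build_error_email(process, exc, support_addr))
        return False
```

Passing the transport in as `send` keeps the sketch testable without a mail server; in practice it could wrap an SMTP client.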

Phase 1: Data Quality (DQ)

Data Quality is the first stage of data transformation. The source data is extracted, cleansed, enriched, transformed, validated against a rich variety of business rules, and loaded into the Cleansed Layer. This data is then combined into linked groups (Aggregation) and matched against the customer’s master data. During the data quality phase, more than 30 types of flat files, related to radio recordings, audio-visual works, new work registrations, films, series, and social media, are aligned with the typical design of a standard data warehouse. The Aggregation and Matching processes were developed to meet specific business needs and the customer’s pre-defined requirements.
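
As a rough illustration of the cleanse-and-validate step, here is a small Python sketch. The field names (`title`, `duration_sec`) and the two rules are invented for the example; the real business rules live in Ataccama and are not described in this case study.

```python
from dataclasses import dataclass, field


@dataclass
class CleansedRecord:
    data: dict
    errors: list = field(default_factory=list)  # names of failed rules


def cleanse(raw):
    """Collapse whitespace in string values and turn empty strings into None."""
    out = {}
    for key, value in raw.items():
        if isinstance(value, str):
            value = " ".join(value.split()) or None
        out[key] = value
    return out


def _positive_int(value):
    text = str(value) if value is not None else ""
    return text.isdigit() and int(text) > 0


# Hypothetical business rules: rule name -> predicate over the cleansed record.
RULES = {
    "title_present": lambda r: bool(r.get("title")),
    "duration_positive": lambda r: _positive_int(r.get("duration_sec")),
}


def run_dq(raw):
    """Cleanse a raw record, then collect the names of all rules it violates."""
    data = cleanse(raw)
    errors = [name for name, rule in RULES.items() if not rule(data)]
    return CleansedRecord(data, errors)
```

Records with an empty `errors` list would move on to the Cleansed Layer; the others would be routed to issue handling.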

Audit and Control Process Orchestration

Our team developed processes for Audit and Control that work like data guards. The implemented Process Orchestration minimizes interruptions of running processes and locking of tables. This feature is based on dedicated tables that contain data about:

- Status of the executed process

- Start date and time

- End date and time

- The number of processed records

- Names of the processes

- Related entities if affected
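
A minimal sketch of such an audit table, using Python's built-in `sqlite3` module, might look as follows. The table and column names and the `RUNNING`/`FINISHED`/`FAILED` status values are assumptions made for illustration, not the project's actual schema.

```python
import sqlite3
from datetime import datetime, timezone


def _utc_now():
    return datetime.now(timezone.utc).isoformat()


def init_audit(conn):
    """Create the (hypothetical) audit table holding one row per process run."""
    conn.execute(
        """CREATE TABLE IF NOT EXISTS process_audit (
               id INTEGER PRIMARY KEY,
               process_name TEXT NOT NULL,
               status TEXT NOT NULL,
               started_at TEXT NOT NULL,
               ended_at TEXT,
               records_processed INTEGER,
               related_entities TEXT
           )"""
    )


def run_audited(conn, name, step, entities=""):
    """Run `step` (which returns a record count) and log its outcome."""
    cur = conn.execute(
        "INSERT INTO process_audit "
        "(process_name, status, started_at, related_entities) "
        "VALUES (?, 'RUNNING', ?, ?)",
        (name, _utc_now(), entities),
    )
    run_id = cur.lastrowid
    try:
        count, status = step(), "FINISHED"
    except Exception:
        count, status = None, "FAILED"
    conn.execute(
        "UPDATE process_audit SET status = ?, ended_at = ?, records_processed = ? "
        "WHERE id = ?",
        (status, _utc_now(), count, run_id),
    )
    conn.commit()
    return status
```

An orchestrator could query this table for rows still marked `RUNNING` before starting a process that touches the same entities.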

Phase 2: Data Mastering (DM)

Once the data quality phase is completed, the transformed source data is loaded into the Master Data Repository with its specific relations. The DM stage has separate processes for Data Cleansing and Data Matching. The final layer is the Master layer, where records matched by the matching rules are merged into a single golden record.
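
To make the match-and-merge idea concrete, here is a deliberately simplified Python sketch. The matching rule (same normalized title and artist), the source names, and the priority order are all hypothetical; the real rules are far more complex and are configured in Ataccama MDC.

```python
from collections import defaultdict

# Hypothetical source priority: a lower number wins when values conflict.
SOURCE_PRIORITY = {"registry": 0, "radio_log": 1, "social": 2}


def _norm(text):
    return "".join(ch for ch in (text or "").lower() if ch.isalnum())


def match_key(record):
    """Simplified matching rule: same normalized title and artist."""
    return (_norm(record.get("title")), _norm(record.get("artist")))


def build_golden_records(records):
    """Group matching records and merge each group into one golden record."""
    groups = defaultdict(list)
    for rec in records:
        groups[match_key(rec)].append(rec)

    golden = []
    for group in groups.values():
        ordered = sorted(group, key=lambda r: SOURCE_PRIORITY.get(r.get("source"), 99))
        merged = {}
        for rec in ordered:
            for name, value in rec.items():
                if name == "source":
                    continue
                # Keep the first non-empty value, i.e. the highest-priority one.
                if merged.get(name) in (None, "") and value not in (None, ""):
                    merged[name] = value
        merged["sources"] = [r.get("source") for r in ordered]
        golden.append(merged)
    return golden
```

Keeping the list of contributing sources on each golden record is what makes a later split (unmerge) possible.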

Data Stewards can review the results, edit the records, and then return the updated record to the golden records repository. The Issue Tracking feature (DQIT for the DQ layer and MDAIM for the Master layer) uses separate repositories where users can also review and update records.

The master data management hub has been integrated with many other applications, such as web applications, 3rd-party services, external systems via stream consumers, and database updates, through an advanced event handler configuration that captures all changes. Event handlers are, in essence, plans that are triggered when specific criteria are met on an insert, delete or update in the MDM hub. Through them, all such events are captured and the changes are reflected in real time in the user interface and all integrated systems. This brings tremendous collaboration capabilities and data reliability to all users.
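
The event handler concept, a plan that fires only when an operation meets its criteria, can be sketched in a few lines of Python. The `EventBus` class and the shape of the `change` dictionary are assumptions for illustration and do not reflect Ataccama's configuration format.

```python
from collections import defaultdict


class EventBus:
    """Registers handlers per operation ('insert', 'update', 'delete')."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def on(self, operation, handler, when=lambda change: True):
        """Run `handler` for `operation` whenever `when(change)` is true."""
        self._handlers[operation].append((when, handler))

    def publish(self, operation, change):
        """Dispatch a change to matching handlers; return how many fired."""
        fired = 0
        for when, handler in self._handlers.get(operation, []):
            if when(change):
                handler(change)
                fired += 1
        return fired
```

For example, `bus.on("update", push_to_web_app, when=lambda c: c["entity"] == "recording")` would forward only recording updates to a hypothetical `push_to_web_app` function.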

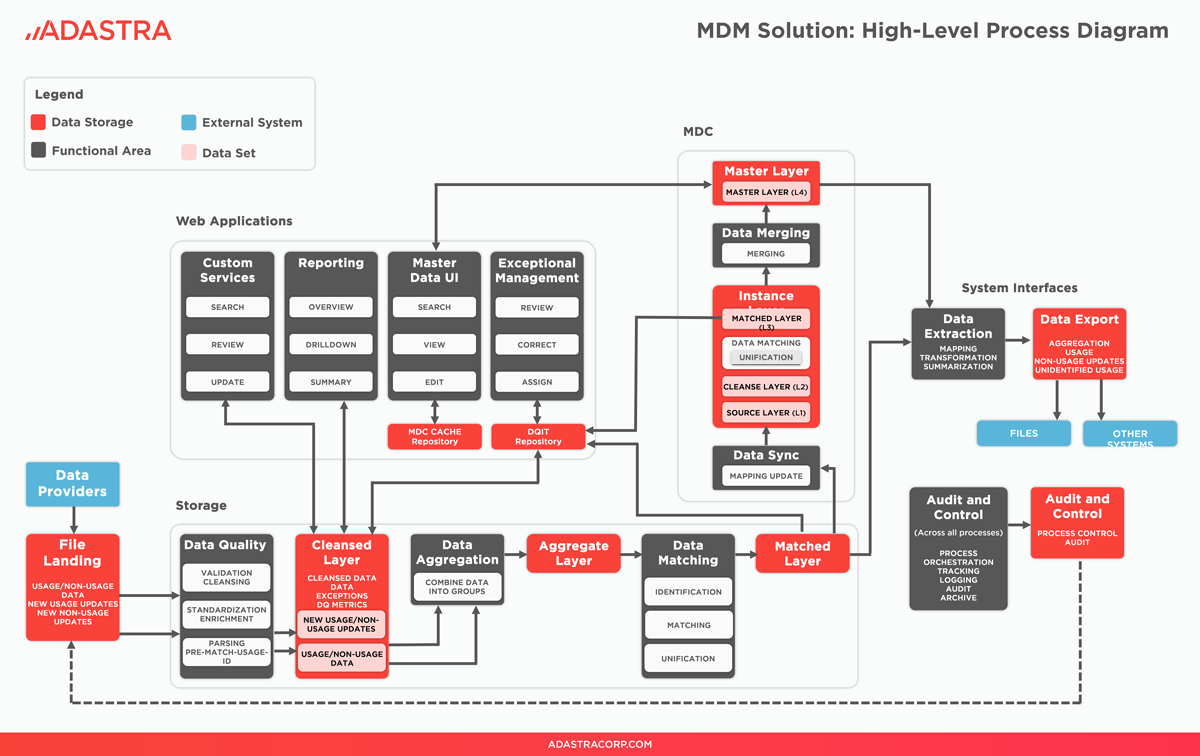

Diagram 1: Master Data Management Solution Architecture

The most powerful aspect of the Master Data Center (MDC) is its ability to merge or split records based on complicated logic. The matching and merging rules complement each other to ensure that the golden record repository is always up to date and accurate. The structure of these records is determined by the specific business case and its complex rules.

To achieve this structure, all matching rules are streamlined to consolidate source data from disparate systems according to priority, completeness, quality and efficiency. The services use SOAP over HTTP for message transfer, and their back-end logic is developed in Ataccama. The SQL queries are generated dynamically based on the fields chosen as source input.
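
Dynamic query generation from the chosen input fields could look roughly like the Python sketch below. The table and field names are hypothetical; the whitelist and bound parameters are one common way to keep dynamically built SQL safe, not necessarily the approach used in the project.

```python
# Hypothetical whitelists of queryable tables and fields.
ALLOWED_TABLES = {"master_recording", "master_film"}
ALLOWED_FIELDS = {"title", "artist", "source_system", "country"}


def build_query(table, criteria):
    """Build a parameterized SELECT from the fields present in `criteria`.

    Returns (sql, params). Values are bound as parameters; only whitelisted
    identifiers are ever interpolated into the SQL text.
    """
    if table not in ALLOWED_TABLES:
        raise ValueError(f"unknown table: {table}")
    unknown = set(criteria) - ALLOWED_FIELDS
    if unknown:
        raise ValueError(f"unknown fields: {sorted(unknown)}")

    sql = f"SELECT * FROM {table}"
    clauses = [f"{name} = ?" for name in criteria]
    if clauses:
        sql += " WHERE " + " AND ".join(clauses)
    return sql, list(criteria.values())
```

A service handler would call `build_query` with the fields found in the incoming request and pass the result to the database driver.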

Complementary User Interfaces

- Master Data Analysis (MDA) and Master Data Issue Management (MDAIM)

The Data Stewards can review and determine special cases for further data review in the Master Data Issue Management (MDAIM) user interface.

- Web Applications Master Management Center (MMC)

Adastra provided microservices that power the MMC, a web application developed by our client that gives users an additional way to review and edit data.

- Reporting Capabilities of the MDM Hub

An advanced layout of the reports was developed in Power BI Report Server. Some of the reports use additional flat tables as their source, and dedicated processes keep the data in these flat tables up to date. The remaining reports use stored procedures that read directly from the cleansed, aggregated, matched and master tables. All affected tables were optimized with indexes.

Impact

- 50+% more clean data in the new system

- 10x faster data processing

- 3x faster customer service

The master data management solution developed in Ataccama enabled our client to speed up customer service and process accurate data faster than ever. The MDM platform dynamically recognizes the incoming information and distributes it to its corresponding processes (reading, parsing, writing, aggregation, matching, MDC load).
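
One simple way to picture this dispatching is a pattern-based router. The file name patterns and process names in this Python sketch are invented for illustration; the case study does not describe how the platform actually recognizes incoming data.

```python
from fnmatch import fnmatchcase

# Hypothetical routing table: file name pattern -> target process chain.
ROUTES = [
    ("radio_*.csv", "radio_recordings"),
    ("film_*.csv", "films"),
    ("social_*.json", "social_media"),
]


def route(filename):
    """Return the process chain for a file, or 'unrecognized' if none matches."""
    name = filename.lower()
    for pattern, process in ROUTES:
        if fnmatchcase(name, pattern):
            return process
    return "unrecognized"
```

Files that come back as `unrecognized` would be a natural trigger for the email notifications described earlier.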

The MDM solution architecture prevents data loss and notifies the interested parties if an error occurs. An innovative MDC approach organizes all Golden Records in a curated library. The high data reliability has strengthened our client’s legal compliance in the long run.

The MDM solution provides our client with a range of powerful features such as:

- Master Data Center golden repository that consolidates customer data from disparate systems in variable formats.

- Web interface for reviewing Master Data.

- Web interface for manual issue resolution.

- Web interface for manual matching, merging and splitting Master Data.

- Active monitoring of the processes combined with an email notification system.

- Custom SOAP over HTTP services which generate dynamic SQL queries.

- 10-second Event Handlers which “catch” incoming updates and keep the data synchronized in real time.

- Near real-time sync between the Golden Records repository and all 3rd party systems.