
The EU AI Act Explained: A Complete Business Guide to Compliance, Penalties, and Strategic Opportunities

March 26, 2025

Artificial intelligence is now a key force for innovation in many industries, including finance, e-commerce, manufacturing, and public services. The EU AI Act is the first comprehensive legal framework for regulating AI systems in the European Union. Its goal is to strike a balance between fostering technological progress and safeguarding fundamental rights, privacy, and safety.

Why the EU AI Act Matters for Your Business

The AI Act introduces binding rules backed by fines. Both can significantly affect your company’s legal, operational, and financial position.

The regulation does not just affect companies that create AI technologies. It also applies to organizations that use or integrate AI tools in their operations. This includes areas like customer service, supply chains, and decision-making systems.

Key Business Implications

Opportunities with AI Compliance

- Streamline operations and reduce costs through intelligent automation

- Improve customer experience with smarter digital services

- Enhance decision-making and accelerate market expansion

- Gain competitive advantage by becoming a trusted, compliant AI adopter

Risks of Non-Compliance

- Failing to meet AI Act requirements can result in reputational damage

- Risk of algorithmic bias and discriminatory outcomes

- Hefty fines and legal consequences for non-compliance

Why Was the EU AI Act Introduced — And What Does It Cover?

The European Union recognizes the strategic importance of artificial intelligence in driving digital transformation and long-term innovation. At the same time, it seeks to prevent scenarios where rapidly evolving AI technologies spiral out of control.

The EU AI Act creates a framework of trust built on the principles of ethics, law, and safety. This is meant to keep innovation sustainable and aligned with European values.

Its goal is to allow progress while keeping data private. It also aims to ensure user safety and fair access to AI services.

A Key Part of Europe’s Digital Strategy

The EU AI Act is not a standalone rule. It is part of the EU’s broader strategy for the European Digital Decade and sits alongside other instruments such as the GDPR and the Data Governance Act.

Together, these laws aim to create a clear and fair environment in which developers can create and use data, algorithms, and AI systems responsibly.

Why the EU Decided to Regulate AI: Real-World Failures

The AI Act is a response to real incidents that revealed the dangers of unregulated AI:

Racist chatbots – Poorly trained language models absorbed biased data and generated offensive content.

Discrimination in recruitment – AI hiring tools delivered biased outcomes because of historical prejudice embedded in training data.

Autonomous vehicle crashes – Flaws in image recognition led to safety-critical errors on the road.

The EU AI Act sets clear rules for AI in Europe. It focuses on transparency, safety, and fairness. This applies to both developers and users of AI tools in business.

What Business Leaders Need to Know: Key Impacts of the EU AI Act on Companies

Defining AI vs. Traditional Software

One of the EU AI Act’s core principles is the distinction between traditional software and true AI systems. The main question is whether your solution operates with some autonomy and adapts: does it learn from data and improve its results, or does it just follow fixed rules?

Does Your AI Fall Under Regulation?

If your model or tool shows characteristics of machine learning, it is likely subject to the AI Act. This includes neural networks and large language models (LLMs). It also includes regression models, classification algorithms, and other types of AI used in business.

AI Risk Categories

The EU AI Act introduces a risk-based classification system, ranging from prohibited to low-risk AI systems:

• Prohibited practices

These include social scoring systems similar to those in China, the manipulation of vulnerable groups, and other uses that seriously threaten human rights. Such systems must be withdrawn from use immediately.

• High-risk

This category includes systems that directly affect people’s lives and opportunities — for example, AI in HR (candidate selection, terminations), healthcare (diagnosis, treatment recommendations), or banking (loan approvals). These systems are subject to the strictest requirements for transparency, auditing, and human oversight.

• Medium and low risk (limited and minimal risk in the Act’s terms)

Examples include customer service chatbots, product recommendation engines in e-commerce, and spam filters. These tools must follow basic rules on data protection and clear communication, for example telling users that they are interacting with a chatbot. The compliance rules are much lighter than those for high-risk systems.

Obligations and Penalties

Non-compliance with the AI Act can be extremely costly. For the most serious violations, fines can reach EUR 35 million or 7% of a company’s total worldwide annual turnover, whichever is higher. The turnover is calculated at the group level, not just for the EU branch.

From a commercial standpoint, early preparation is essential. By putting the right internal processes in place, including proper documentation and human oversight, companies can reduce the likelihood of investigations and fines and avoid major losses.
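To make the scale concrete, the fine ceiling described above can be worked through as simple arithmetic. The sketch below is illustrative only: the function name `max_fine_eur` and the example turnover figures are hypothetical, and the EUR 35 million fixed amount is the figure the Act sets for the most serious infringements (prohibited practices). Lower ceilings apply to other types of infringement, so the result is an upper bound, not a prediction of an actual fine.

```python
def max_fine_eur(worldwide_turnover_eur: int,
                 fixed_amount_eur: int = 35_000_000,
                 turnover_percent: int = 7) -> float:
    """Upper bound of the fine for the most serious infringements.

    Takes the higher of a fixed amount and a percentage of total
    worldwide annual turnover (group level). Both defaults are the
    ceilings for prohibited practices; other infringements have
    lower ceilings that are not modelled here.
    """
    if worldwide_turnover_eur < 0:
        raise ValueError("turnover must be non-negative")
    turnover_based = worldwide_turnover_eur * turnover_percent / 100
    return max(fixed_amount_eur, turnover_based)


# Hypothetical group with EUR 2 billion in worldwide annual turnover:
# 7% of turnover exceeds the fixed amount, so the percentage applies.
print(max_fine_eur(2_000_000_000))

# Hypothetical group with EUR 100 million in turnover:
# 7% is only EUR 7 million, so the fixed amount is the ceiling.
print(max_fine_eur(100_000_000))
```

For a large group, the turnover-based figure dominates, which is why the group-level calculation matters more than the size of the EU branch.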

Practical Use Cases and Their Risk Classification

Under the EU AI Act, AI technologies are not treated as dangerous in themselves, provided there is proper oversight and the system is not overly autonomous. The level of risk is determined primarily by the impact on human rights and life opportunities.

HR Chatbot

Recommendation Assistant:

If an HR chatbot only suggests job postings based on a candidate’s preferences, it is seen as low-risk. The applicant makes their own decisions. The AI does not affect who moves to the next stage of recruitment.

Decision-Making Tool:

If the chatbot automatically filters out applicants based on scores or rules, it may be seen as a high-risk application. This is because it directly affects employment opportunities — a critical area where the EU requires transparency and human oversight.

AI Fraud Detection

Support Model:

In banking and insurance, AI is frequently used to detect fraud more efficiently. As long as the system flags suspicious cases for human review, it remains in a lower-risk category. Humans retain final decision-making authority, so there is no automatic denial of claims.

Fully Autonomous System:

The risk level increases when AI automatically blocks transactions or claim payouts without any human review. This represents a more serious interference with individuals’ financial rights. Under the AI Act, such systems must provide model explainability and a right to human appeal.

Credit Scoring

Predictive Algorithm:

Many banks use AI to assess loan applicants. However, a banker or risk manager still makes the final decision. In these cases, the system typically falls under a medium-risk category. Applicants have the right to request an explanation and speak with a human advisor.

Automated Decision-Making:

If the credit approval process removes human judgment completely and the algorithm alone determines who gets approved, the system is considered high-risk. In these cases, the AI Act requires organizations to demonstrate that the model does not produce biased outcomes and that human oversight remains in place.

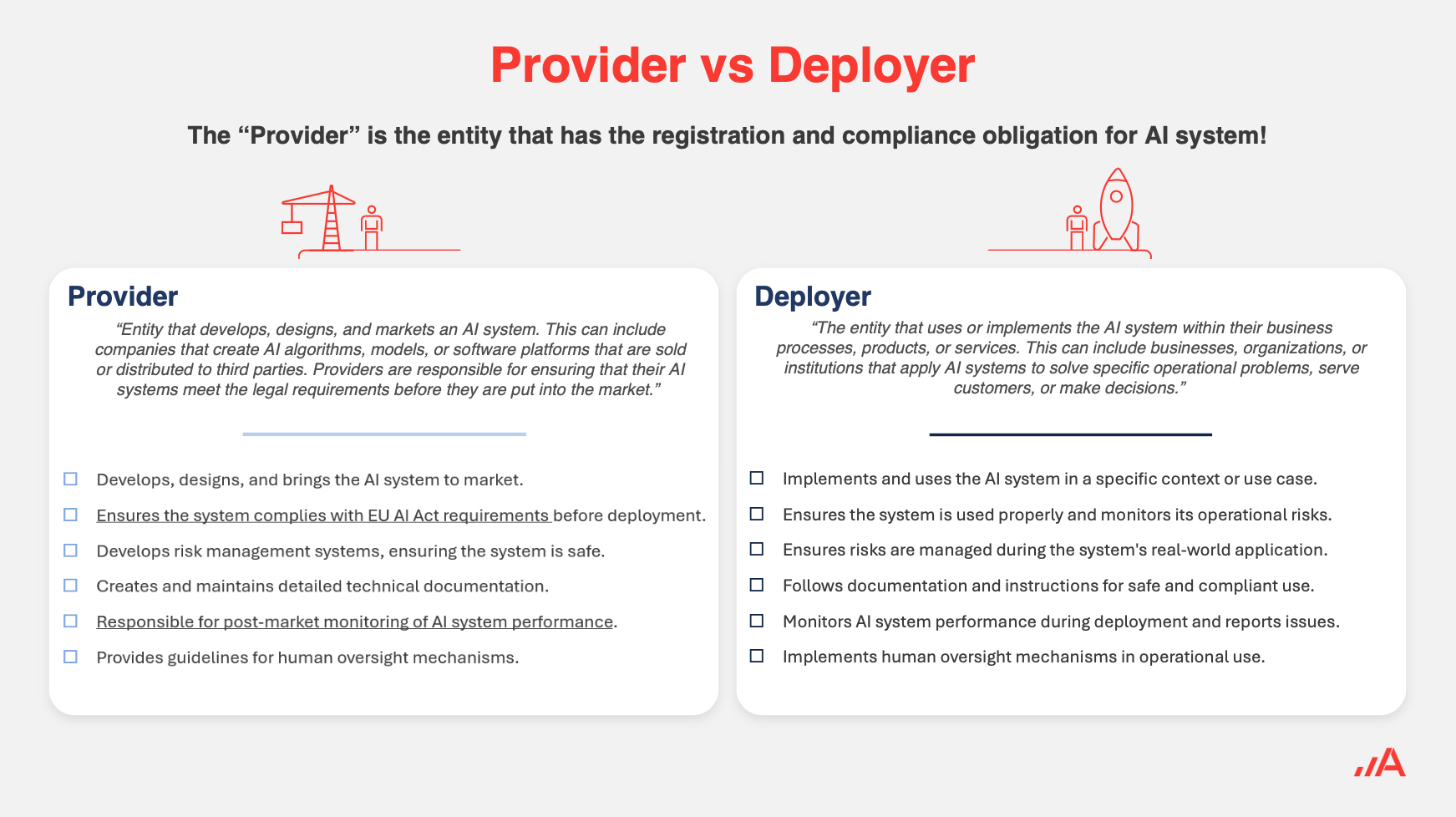
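The use cases above share one pattern: the same tool moves to a higher risk tier once it makes decisions about people without human review. A minimal sketch of that pattern as a first-pass triage helper follows. Everything in it is an assumption made for illustration: the names `UseCase`, `RiskTier`, and `triage`, the area labels, and the two-question rule itself. It mirrors this article’s simplified reasoning, not the Act’s legal test, so a real classification still needs legal review.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high risk"
    LOWER = "lower risk"


# Illustrative labels only, taken from the examples in this article.
PROHIBITED_PRACTICES = {"social_scoring", "manipulating_vulnerable_groups"}
SENSITIVE_AREAS = {"hr", "healthcare", "banking"}


@dataclass(frozen=True)
class UseCase:
    name: str
    area: str                    # business area the system operates in
    decides_without_human: bool  # True if no person reviews the outcome
    practice: str = ""           # set only for a known prohibited practice


def triage(use_case: UseCase) -> RiskTier:
    """First-pass triage that follows the article's examples."""
    if use_case.practice in PROHIBITED_PRACTICES:
        return RiskTier.PROHIBITED
    # Sensitive area plus no human review: treat as high risk.
    if use_case.area in SENSITIVE_AREAS and use_case.decides_without_human:
        return RiskTier.HIGH
    return RiskTier.LOWER


examples = [
    UseCase("HR chatbot suggesting job postings", "hr", False),
    UseCase("HR chatbot filtering out applicants", "hr", True),
    UseCase("Fraud model flagging cases for review", "banking", False),
    UseCase("Fully automated credit approval", "banking", True),
]

for case in examples:
    print(f"{case.name}: {triage(case).value}")
```

The helper is deliberately conservative in one direction only: it never lowers a tier because of human review in a prohibited practice, and it leaves every borderline case to be escalated by the people running the inventory.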

Roles in the AI Ecosystem: Provider vs. Deployer

The EU AI Act clearly defines who is responsible for the development and operation of AI systems. The reason is simple: algorithms can greatly impact users’ lives, so it must be clear who is responsible for mistakes or problems.

Provider

This role applies to anyone who creates an AI system or makes substantial changes to it, such as retraining the model or changing its main functions. A provider can be an external AI development company or your internal technical team.

If you qualify as a provider under the AI Act, you are required to:

- Ensure complete documentation of the model, including training data

- Conduct regular audits to demonstrate the system behaves safely and without bias

Deployer

This role applies to any organization that uses and implements an AI system in its operations. Even if the deployer did not help create the model, it is still responsible for ensuring that the AI system follows legal and ethical standards. This includes protecting data and communicating clearly with users.

In practice, this means that regulators may hold both the provider and the deployer responsible, since the deployer is the one who puts the system into use.
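The role test described above reduces to a few yes/no questions, and one organization can hold both roles at once. The sketch below is a hypothetical helper, not a legal test: `Role`, `roles_for`, and the three parameters are names invented for illustration, and "deployer" is the AI Act’s own term for the organization that uses the system in its operations.

```python
from enum import Flag, auto


class Role(Flag):
    NONE = 0
    PROVIDER = auto()   # creates the system or substantially changes it
    DEPLOYER = auto()   # uses the system in its own operations


def roles_for(created_system: bool,
              made_substantial_changes: bool,
              uses_in_operations: bool) -> Role:
    """Return every role an organization holds for one AI system."""
    roles = Role.NONE
    if created_system or made_substantial_changes:
        roles |= Role.PROVIDER
    if uses_in_operations:
        roles |= Role.DEPLOYER
    return roles


# Hypothetical company that retrains a purchased model and then
# runs it in its own customer service: it holds both roles.
both = roles_for(created_system=False,
                 made_substantial_changes=True,
                 uses_in_operations=True)
print(Role.PROVIDER in both, Role.DEPLOYER in both)
```

Modelling the roles as combinable flags rather than a single label reflects the point that responsibility can sit with more than one party, or with one party twice.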

Shared Responsibility and Multi-Level Governance

In real-world deployments, more than two parties are often involved. A typical setup might include a large cloud solution provider, a smaller system integrator, and the final business user.

For example, one company might create the main AI model. Another company uses it on a custom cloud setup. A third company provides it as a service to users.

In this chain, each party has different responsibilities under the AI Act. Together, they ensure complete oversight of the AI system.

Practical Example

Let’s take the implementation of an advanced chatbot in a bank:

- Microsoft (provider) supplies the large language model (LLM)

- An integrator customizes the model for the bank’s internal processes and data sources

- The bank, as the organization deploying the chatbot, integrates it into its customer service platform

Each party is responsible for different areas of compliance:

- Microsoft ensures the safety and robustness of the core AI technology

- The integrator manages technical adaptation

- The bank must ensure that the chatbot communicates with clients lawfully: it must not collect data without permission or mislead anyone

The AI Act clearly outlines the main roles. However, only real-world use will show how responsibilities and potential liabilities are shared among stakeholders.

How to Prepare: AI Governance and Compliance in Practice

AI Governance Framework

More and more companies are building comprehensive AI governance frameworks to manage and control AI systems effectively. These frameworks typically include:

- Internal policies for AI development and deployment

- Methodologies for data validation, risk management, and human oversight

- Principles for data governance and bias mitigation

It’s important to align AI policies with your overall IT policies, ethical codes, and contracts. This ensures that AI projects meet legal and regulatory requirements from the start.

Organizational and Personnel Considerations

Much as they did when GDPR was introduced, companies can establish an AI board or appoint an AI compliance officer. This body or role oversees adherence to the AI Act and may be responsible for:

- Managing personal data processed by AI systems

- Overseeing model lifecycle, updates, and audits

- Communicating with executive leadership and relevant departments

Selecting and Purchasing AI Solutions

When acquiring AI solutions from external vendors, companies should:

- Prepare a checklist of key requirements related to safety and reliability

- Clearly define accountability for potential incidents

- Request evidence of training data quality and non-discriminatory behavior

Documentation and Audit Trail

For high-risk AI systems, the EU requires detailed documentation, including:

- Training data sets

- Test results

- Human oversight procedures

- Model versions and updates

This audit trail helps with compliance during inspections. It also protects the company from damage to its reputation and legal risks.
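The documentation items listed above can be captured as one structured record per model version. The following is a minimal sketch under stated assumptions: the class name `AuditRecord`, its field names, and the example values are all hypothetical, and the Act does not prescribe this or any other file format. The point is only that a record with explicit fields makes gaps easy to spot before an inspection.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class AuditRecord:
    system_name: str
    model_version: str
    recorded_on: str                                   # ISO date string
    training_datasets: List[str] = field(default_factory=list)
    test_results: Dict[str, float] = field(default_factory=dict)
    oversight_procedure: str = ""                      # who reviews what, and when

    def missing_items(self) -> List[str]:
        """List the documentation items that are still empty."""
        missing = []
        if not self.training_datasets:
            missing.append("training data sets")
        if not self.test_results:
            missing.append("test results")
        if not self.oversight_procedure:
            missing.append("human oversight procedure")
        return missing

    def to_json(self) -> str:
        """Serialize the record so it can be stored with the model."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


# Hypothetical record for a credit-scoring model.
record = AuditRecord(
    system_name="credit-scoring",
    model_version="1.2.0",
    recorded_on="2025-03-26",
    training_datasets=["loan-applications-sample"],
)
print(record.missing_items())
print(record.to_json())
```

Storing one such record per model version, next to the model artifact itself, keeps the history of updates and test results in one place.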

EU AI Act Timeline and Next Steps

Key Milestones

- February 2025: Mandatory removal of all prohibited AI applications (e.g. manipulative or discriminatory systems)

- 2026–2027: Gradual enforcement of rules for high-risk AI systems (HR, healthcare, banking)

- By 2030: Full harmonization across all EU member states and full applicability of the AI Act

What You Can Do Right Now

If you don’t want to wait for local authorities or detailed implementation guidelines, there are several actions you can already take to prepare:

- Map Existing AI Systems: Conduct a thorough inventory of all algorithms currently in use within your organization. For each, assess whether it is merely supportive software or a truly autonomous and adaptive AI system. Then classify them based on the AI Act’s risk categories.

- Prepare Internal Guidelines and Team Training: Evaluate whether you already have a sufficient AI governance framework in place to guide AI development and deployment. Involve technical teams, legal counsel, HR, and management in the process. Don’t underestimate the importance of training: your staff should understand why these rules are being introduced and how they impact daily operations.

- Launch the Documentation Process: Even if your systems are not currently considered high-risk, it’s wise to start building comprehensive documentation now. Keeping detailed records of AI development and deployment will help you respond to audits or regulator inquiries and prove how the technology operates and which processes it influences.

- Consult Legal Experts and IT Vendors: Talk to your legal and IT teams to assess whether your current infrastructure (cloud setup, data flows, security measures) is ready to meet the new regulatory demands. The same applies to your contracts, especially if you rely on third-party AI models. Some agreements may need to be updated to comply with the AI Act.
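The first and third steps above, mapping systems and starting documentation, can begin with a simple inventory that is checked for gaps. The sketch below is one hypothetical way to structure it: `InventoryEntry`, `summarize`, `open_actions`, the tier labels, and the example systems are all invented for illustration, and the tier of each entry is assumed to have been assigned by people, not computed.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List


@dataclass
class InventoryEntry:
    name: str
    adaptive: bool        # learns from data, rather than following fixed rules
    risk_tier: str        # assigned manually, e.g. "high" or "lower"
    owner: str = ""       # person or team accountable for the system
    documented: bool = False


def summarize(entries: List[InventoryEntry]) -> Counter:
    """Count adaptive (AI) systems per risk tier."""
    return Counter(e.risk_tier for e in entries if e.adaptive)


def open_actions(entries: List[InventoryEntry]) -> List[str]:
    """List follow-ups for AI systems lacking an owner or documentation."""
    actions = []
    for e in entries:
        if not e.adaptive:
            continue  # fixed-rule software is out of scope for this check
        if not e.owner:
            actions.append(f"{e.name}: assign an owner")
        if not e.documented:
            actions.append(f"{e.name}: start documentation")
    return actions


# Hypothetical inventory.
inventory = [
    InventoryEntry("CV screening model", True, "high", owner="HR"),
    InventoryEntry("Product recommender", True, "lower"),
    InventoryEntry("Invoice routing rules", False, "lower"),
]

print(summarize(inventory))
for action in open_actions(inventory):
    print(action)
```

Even a spreadsheet with these columns serves the same purpose; what matters is that every system has a tier, an owner, and a documentation status that someone reviews.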

If you’re already deploying AI systems that border on high-risk use cases, don’t delay your preparation. Each month you spend proactively addressing weak spots will help you avoid rushed, last-minute changes when the relevant obligations start to apply.

Turning EU AI Act Regulation Into Strategic Advantage

When businesses hear the word regulation, they often think of added costs and limitations on innovation. But in the case of the EU AI Act, compliance can offer more than just risk mitigation — it can become a competitive advantage. By demonstrating that your AI systems are safe, transparent, and fair, you strengthen trust among partners, customers, and public institutions.

Trust and Brand Reputation

Transparent and ethical use of AI enhances your brand image. Customers and business partners are increasingly drawn to socially responsible organizations that prioritize fair and secure innovation.

Risk Management

Compliance with the AI Act reduces the risk of fines and reputational damage. A functional compliance system allows you to respond quickly to regulator demands or audits — helping you avoid crisis scenarios and maintain operational continuity.

New Business Opportunities

Companies that invest in AI-ready infrastructure and can prove the safety and fairness of their models are better positioned to:

- Establish international partnerships

- Attract corporate clients

- Secure funding from investors who require legal certainty and regulatory alignment

Start Sooner Rather Than Later

To maintain control and stay ahead of upcoming obligations, assemble a cross-functional team (IT, legal, HR, compliance) to assess your current state and prepare a roadmap for meeting EU AI Act requirements.

Begin your AI system inventory and staff training now — delays could cost you time, money, and your reputation in a rapidly evolving market.

How Will the EU AI Act Impact Your Business?

Want to know more? Here is a webinar recording from our expert workshop. Gain critical insights into the regulation that is reshaping how businesses use AI across all sectors. Our session demystifies the new rules, offers actionable strategies for implementation, and helps you avoid the risk of steep fines.